Commands used in this guide

Learn more about all the aspects of the balancing & ranking system in BAR.

Rating System

OpenSkill

OpenSkill (https://openskill.me/) is the algorithm we use for BAR's multiplayer rating system. When you win or lose a match, your rating is adjusted.

TrueSkill

You might see players mention TrueSkill (https://trueskill.org/), which is the system we used previously. TrueSkill and OpenSkill both rely on Bayesian inference to model and update players’ ratings, and as such are very similar. If you are familiar with one, most of what you know also applies to the other.

A separate rating is given for each type of match:

- Duel (1v1)

- Small Teams (2v2 - 5v5)

- Large Teams (6v6+)

- Free For All (FFA)

- Team FFA
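The list above amounts to a simple rule on team sizes. The sketch below is hypothetical: the helper name `rating_type`, using the largest team to classify uneven matches, and splitting FFA from Team FFA by whether any side has more than one player are assumptions for illustration, not BAR's code.

```python
def rating_type(team_sizes: list[int]) -> str:
    """Map a match layout (players per team) to a rating category.

    Hypothetical sketch: thresholds follow the list above; handling of
    uneven teams is an assumption (we classify by the largest team).
    """
    if len(team_sizes) < 2:
        raise ValueError("a rated match needs at least two teams")
    if len(team_sizes) > 2:
        # More than two sides: all solo players -> FFA, otherwise Team FFA.
        return "FFA" if max(team_sizes) == 1 else "Team FFA"
    largest = max(team_sizes)
    if largest == 1:
        return "Duel"
    if largest <= 5:
        return "Small Teams"
    return "Large Teams"


print(rating_type([1, 1]))     # Duel
print(rating_type([8, 8]))     # Large Teams
print(rating_type([2, 2, 2]))  # Team FFA
```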

Skill (mu)

Skill (denoted mu) is a numeric value representing the estimated capability of a player. The higher a player’s estimated skill, the more likely they are to win BAR matches against players with lower estimated skill. Winning matches increases your skill, whilst losing reduces it, although matches with many players (such as 8v8) result in smaller changes in skill, as it is more difficult to determine each player’s contribution to a win or loss.

Defeating opponents rated higher than you results in larger gains; likewise, losing to a lower-rated opponent results in larger losses.
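To see why upsets move ratings more, here is a minimal 1v1 sketch in the style of the Weng-Lin update that OpenSkill is based on. It assumes a performance spread `BETA` of 25/6 (half the starting uncertainty) and leaves out OpenSkill's optional extras; `skill_change` is a hypothetical helper, not BAR's implementation.

```python
import math

BETA = 25 / 6  # assumed performance variability (starting sigma / 2)


def skill_change(mu: float, sigma: float, opp_mu: float, opp_sigma: float, won: bool) -> float:
    """Change in mu for one player after a 1v1, Weng-Lin style (sketch)."""
    c = math.sqrt(sigma**2 + opp_sigma**2 + 2 * BETA**2)
    # The model's probability that this player wins.
    p_win = 1 / (1 + math.exp((opp_mu - mu) / c))
    score = 1.0 if won else 0.0
    # The further the result is from the prediction, the bigger the change.
    return sigma**2 / c * (score - p_win)


# Same uncertainty on both sides, so only the skill gap differs.
upset = skill_change(20, 5, 30, 5, won=True)     # beat a stronger opponent
expected = skill_change(30, 5, 20, 5, won=True)  # beat a weaker opponent
print(round(upset, 2), round(expected, 2))       # 2.03 0.69
```

The `sigma**2` factor is also why a player with higher uncertainty sees larger swings for the same result.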

This is the distribution of Skill at the time of writing. With many new players, you can see most are still near the starting value of 25.


Uncertainty (sigma)

Uncertainty (denoted sigma) is a measure of how confident the rating model is of your skill level. You start with an uncertainty of 8.33, which then decreases as you play more matches. A higher uncertainty also means skill changes more quickly after each match.

The example below shows the uncertainty around each player’s skill and how it affects the likelihood that an Experienced player with high skill and around 100 games played will beat either a completely new player (moderate certainty of winning) or a Veteran player with lower skill but around 1000 games played (high certainty of winning).


Match Rating

A player’s Match Rating is used to balance match lobbies for Beyond All Reason. It is calculated by subtracting your uncertainty from your skill [mu - 1*sigma]. Your uncertainty typically changes more slowly than your skill, so winning will usually improve your Match Rating and losing will usually lower it. Match Rating (also called game rating) is used both for balance and for matchmaking.
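As a quick check of the formula, the hypothetical helper below computes mu - sigma using the skill and uncertainty values from the Teifion / Borg_King example later in this guide.

```python
def match_rating(mu: float, sigma: float) -> float:
    """Match Rating as described above: skill minus one uncertainty."""
    return mu - sigma


print(round(match_rating(31.35, 4.82), 2))  # Teifion: 26.53
print(round(match_rating(20.57, 7.25), 2))  # Borg_King: 13.32
```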

Here we can see the distribution of players’ Match Ratings. As with skill, it is concentrated around the starting value. A player with a Match Rating above 20 is likely quite capable; above 30 should be considered a very strong player.


Leaderboard Rating

Similar to Match Rating, but this rating weights uncertainty more heavily. Specifically, we use [mu - 3*sigma], a value that, according to our model, the player’s actual skill is at or above with ~99.87% certainty. New players start with a Leaderboard Rating of 0. Because new players start with high uncertainty, it is possible for them to lose games and still gain Leaderboard Rating as their uncertainty decreases.
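The sketch below shows where the starting value of 0 and the ~99.87% figure come from, assuming the model treats a player's true skill as normally distributed with mean mu and standard deviation sigma. The helper names are hypothetical.

```python
import math

START_MU = 25.0
START_SIGMA = 25 / 3  # the starting uncertainty of ~8.33


def leaderboard_rating(mu: float, sigma: float) -> float:
    """Leaderboard Rating as described above: mu - 3 * sigma."""
    return mu - 3 * sigma


def prob_skill_at_least(k: float) -> float:
    """P(true skill >= mu - k*sigma), assuming skill ~ Normal(mu, sigma^2)."""
    return 0.5 * (1 + math.erf(k / math.sqrt(2)))


print(leaderboard_rating(START_MU, START_SIGMA))  # new player: (approximately) 0
print(f"{prob_skill_at_least(3):.2%}")            # 99.87%
print(f"{prob_skill_at_least(1):.2%}")            # 84.13%
```

With k = 1 the same calculation gives about 84%, the corresponding confidence for Match Rating's mu - sigma.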

This leaderboard rating is also the value that is used for rank icons.


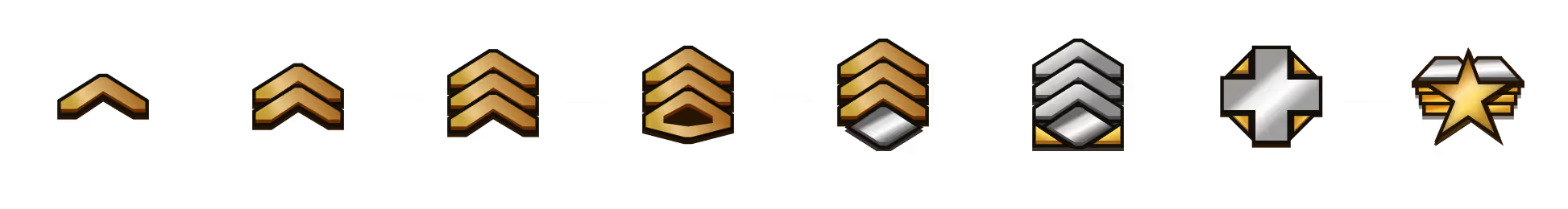

Rank icons

How does my rank icon (the chevrons) work?

Chevrons are based on experience/veterancy and achievements rather than rating. Here is what they mean:

This is subject to change in the future as we agree on the best convention, although the changes will most likely come after the launch of the new lobby client (see #new-client on Discord for details), as the current legacy implementation is very limiting.

Match Rating Example


“Teifion” has an estimated skill of 31.35 with an uncertainty of 4.82.

This gives Teifion a game rating of 26.53.

Teifion plays against his good friend “Borg_King”.

Borg_King has an estimated skill of 20.57 with an uncertainty of 7.25.

Borg_King’s game rating is thus 13.32.

As expected Teifion wins the game.

Teifion gains 0.58 skill, loses 0.05 uncertainty and gets a new rating of 27.16 (+0.63).

Borg_King loses 1.32 skill and 0.23 uncertainty so his game rating is now 12.24 (-1.08).

Teifion had much less uncertainty, so his skill changed less. Had this been a 16-player team game, both players would have seen smaller changes.
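The example can be reproduced approximately with a two-player version of the update from the Weng-Lin paper that OpenSkill builds on. This is a sketch under stated assumptions: `BETA = 25/6`, no extra dynamics term added to sigma before the update, a variance floor of 1e-4, and the hypothetical helper name `rate_1v1`. BAR's production code may set parameters differently.

```python
import math

BETA = 25 / 6   # assumed performance variability (starting sigma / 2)
KAPPA = 1e-4    # assumed floor so the variance never collapses to zero


def rate_1v1(winner: tuple[float, float], loser: tuple[float, float]):
    """Return new (mu, sigma) for winner and loser of a 1v1 (sketch only)."""
    (mu_w, s_w), (mu_l, s_l) = winner, loser
    c = math.sqrt(s_w**2 + s_l**2 + 2 * BETA**2)
    p_w = 1 / (1 + math.exp((mu_l - mu_w) / c))  # predicted chance the winner wins
    p_l = 1 - p_w

    def update(mu: float, sigma: float, score: float, p: float):
        # Skill moves by how surprising the result was, scaled by own variance.
        new_mu = mu + sigma**2 / c * (score - p)
        # Uncertainty shrinks more when own sigma is large relative to c.
        eta = (sigma / c) ** 3 * p_w * p_l
        new_sigma = sigma * math.sqrt(max(1 - eta, KAPPA))
        return new_mu, new_sigma

    return update(mu_w, s_w, 1.0, p_w), update(mu_l, s_l, 0.0, p_l)


(t_mu, t_sigma), (b_mu, b_sigma) = rate_1v1((31.35, 4.82), (20.57, 7.25))
print(f"Teifion:   skill {t_mu - 31.35:+.2f}, uncertainty {t_sigma - 4.82:+.2f}, rating {t_mu - t_sigma:.2f}")
print(f"Borg_King: skill {b_mu - 20.57:+.2f}, uncertainty {b_sigma - 7.25:+.2f}, rating {b_mu - b_sigma:.2f}")
```

Under these assumptions the sketch gives about +0.58 skill and -0.05 uncertainty for Teifion, and about -1.32 skill and -0.23 uncertainty for Borg_King. The final ratings can differ from the quoted figures by about 0.01 because of rounding and any parameters BAR sets differently.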

Balancing Matches

How does balance work?

For full in-depth details please feel free to view the source code. For everybody else, our algorithm follows a relatively simple process.

- As long as there are one or more players left to place on a team:

  - Pick the team with the lowest combined rating

  - That team picks the highest rated player available

  - Repeat from the first step

Typically, in a two-team game this means one team gets the highest rated player and the other team gets the next two highest rated players. You can type $explain in chat to get a listing of exactly how the teams were picked, and we welcome further questions on the subject in our Discord.
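The steps above can be sketched as a short greedy loop. This is an illustration, not BAR's source: the helper name `balance`, the player names, and breaking ties between equally rated teams in favour of the lower team index are all assumptions.

```python
def balance(ratings: dict[str, float], num_teams: int = 2) -> list[list[str]]:
    """Greedy team balance following the steps above (sketch)."""
    teams: list[list[str]] = [[] for _ in range(num_teams)]
    totals = [0.0] * num_teams
    # Go through the remaining players from highest to lowest rated.
    for player in sorted(ratings, key=ratings.get, reverse=True):
        # The team with the lowest combined rating picks next
        # (ties go to the lowest team index)...
        team = min(range(num_teams), key=lambda i: totals[i])
        # ...and takes the highest rated player still available.
        teams[team].append(player)
        totals[team] += ratings[player]
    return teams


print(balance({"A": 30, "B": 25, "C": 20, "D": 10}))  # [['A', 'D'], ['B', 'C']]
```

As described above, one team gets the top player (A) and the other gets the next two (B and C).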

Explain Example

We’ll start with some example $explain output and then break it down part by part. Note that, due to server improvements, the format and specifics of this output may differ from this guide.

Group matching

> Grouped: Garpike, Hound

--- Rating sum: 97.0

--- Rating Mean: 48.5

--- Rating Stddev: 1.5

> Grouped: Fury, Destroyer

--- Rating sum: 73.0

--- Rating Mean: 36.5

--- Rating Stddev: 1.5

End of pairing

If parties are enabled, the output will include a “Group matching” section; if parties are not enabled, or something prevents them from being used, you will instead see “Solo matching”. Group matching takes each group wanting to play together and tries to find a comparable group to put them against. If no comparable group can be found, the group’s members will not be placed on the same team. In this example, Garpike and Hound are found to be similar to Fury and Destroyer, so one team gets one group and the other team gets the other.
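The three figures printed for each group can be sketched with Python's `statistics` module. Two assumptions here: the output appears to use the population standard deviation (a pair of ratings 3 apart gives 1.5), and the individual ratings below are hypothetical, since $explain only shows the group figures and 50/47 and 38/35 are simply one pair each that fits them.

```python
import statistics


def group_summary(ratings: list[float]) -> tuple[float, float, float]:
    """Sum, mean and population standard deviation of a party's ratings."""
    return sum(ratings), statistics.mean(ratings), statistics.pstdev(ratings)


# Hypothetical individual ratings consistent with the example output.
print(group_summary([50.0, 47.0]))  # (97.0, 48.5, 1.5), like Garpike + Hound
print(group_summary([38.0, 35.0]))  # (73.0, 36.5, 1.5), like Fury + Destroyer
```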

Group picked Garpike, Hound for team 1, adding 97.0 points for new total of 97.0

Group picked Fury, Destroyer for team 2, adding 73.0 points for new total of 73.0

Team 1 picked first, so it chose the higher rated group. Team 2 gets the remaining group, but because it now has the lower combined rating, it gets to pick next.

Picked Eagle for team 2, adding 36.0 points for new total of 109.0

Picked Calamity for team 1, adding 30.0 points for new total of 127.0

Picked Brute for team 2, adding 25.0 points for new total of 134.0

Picked Arbiter for team 1, adding 20.0 points for new total of 147.0

Here the teams have taken turns picking from the remaining players: each time, the team with the lowest combined rating picks next, which in this case has resulted in alternating picks. In some situations a team can get two picks in a row.
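The pick phase of this example can be replayed with a small sketch that starts from the two group totals and then lets the lowest-rated team take the best remaining player. The helper name `replay_picks` is hypothetical, and placing the higher-rated group on team 1 mirrors this example rather than a documented rule.

```python
def replay_picks(group_totals: list[float], players: dict[str, float]) -> list[float]:
    """Replay the pick phase shown above and return each team's final total."""
    # Team 1 takes the higher-rated group, team 2 the other one.
    totals = sorted(group_totals, reverse=True)
    for name, rating in sorted(players.items(), key=lambda kv: kv[1], reverse=True):
        team = totals.index(min(totals))  # lowest combined rating picks next
        totals[team] += rating
        print(f"Picked {name} for team {team + 1}, adding {rating} points for new total of {totals[team]}")
    return totals


final = replay_picks(
    [97.0, 73.0],
    {"Eagle": 36.0, "Calamity": 30.0, "Brute": 25.0, "Arbiter": 20.0},
)
```

The printed lines match the four "Picked ..." lines of the example, ending with totals of 147.0 and 134.0.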

Common questions

My rating jumped weirdly between games, why?

Skill, Uncertainty and the corresponding Ratings update after each game, and new players in particular can see noticeable jumps in Match Rating as the OpenSkill algorithm tries to represent their current skill more accurately. Each type of match (Duel, Small Teams, Large Teams, FFA and Team FFA) has its own rating, so switching between them will show the respective rating.

Why can’t new players start with a lower rating?

A very common question and with good reason. New players will often be placed in a game and perform below what their indicated game rating would suggest. Unfortunately there are two reasons we can’t simply put new players at a lower initial rating.

Firstly, it would lower the average rating, so it would only be a temporary fix. We also take player uncertainty into account when balancing games, which helps mitigate the problem.

Secondly, we don’t know how good a new player is. They might be brand new to RTS games (and so likely very bad), or they could be an experienced player from a similar game (and so about to have some easy first games). New players also start with a high uncertainty, which means their ratings change more quickly after each game than those of other players.

Why can’t we rate based on hours played?

Hours played is not an accurate indicator of skill. Some players have spent many hours against comparatively easy AIs and would perform far worse in competitive player vs player matches than their hours would suggest. Ultimately, the only real indicator of skill is winning and losing.

Why can’t we rate based on in-game scores?

Examples of in-game scores include actions per minute (APM), metal produced, damage dealt and suchlike. While many of these correlate with good play, none of them is a direct indicator of skill. None of them can capture communication, encouragement or leadership either. In some cases they could be misleading: a player who deals very little damage, but to a very important area, might be the linchpin of a victory, while a player who produced the most metal might have wasted it and let their team down.

What really matters is how much a player contributes to winning games. Wins and losses are the best indicator of a player’s overall skill when taken as an average over many games. Using any other metric to influence rating could also shift player motivations, such as trying to farm damage instead of trying to win.

Why does my rating in the balance log not always show correctly?

When we balance a game, we fuzz each rating by a small random amount. It should be small enough not to noticeably affect your rating, but large enough to help prevent the same teams from forming every time.
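A minimal sketch of the idea, assuming a uniform offset in a fixed range: the guide does not say how large the fuzz is or how it is drawn, so `max_fuzz`, the uniform distribution, and the helper name `fuzz_ratings` are all hypothetical.

```python
import random


def fuzz_ratings(ratings: dict[str, float], max_fuzz: float, rng: random.Random) -> dict[str, float]:
    """Add a random offset in [-max_fuzz, max_fuzz] to each rating (sketch)."""
    return {name: r + rng.uniform(-max_fuzz, max_fuzz) for name, r in ratings.items()}


rng = random.Random(42)  # seeded so the example is repeatable
base = {"Eagle": 36.0, "Calamity": 30.0, "Brute": 25.0, "Arbiter": 20.0}
fuzzed = fuzz_ratings(base, max_fuzz=0.5, rng=rng)
print(fuzzed)
```

Because the balancer orders players and teams by rating, even a small offset can swap two nearly equal players between teams, which is what stops identical teams forming every time.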

I think we should do...

[insert idea here]

We are thrilled to receive suggestions and ideas. We encourage you to share well-articulated concepts, supported by compelling evidence. Remember, presenting a strong case for your idea can spark the interest of our dedicated developers, making them eager to consider your proposed changes. Join the conversation in the dedicated #openskill channel on our Discord.

Further reading

- The whitepaper for OpenSkill

- Our OpenSkill implementation

- OpenSkill is very similar to an algorithm owned by Microsoft called TrueSkill
